Multiple Modal Features and Multiple Kernel Learning for Human Daily Activity Recognition

- University of Science, VNU-HCM, Ho Chi Minh City, Viet Nam

- University of Science, VNUHCMC, 227 Nguyen Van Cu Street, Ho Chi Minh, Viet Nam

Abstract

Introduction: Recognizing human activity in daily environments has attracted considerable research attention in computer vision and recognition in recent years. It is a challenging problem, not only because of background clutter, occlusion and intra-class variation in image sequences, but also because complex activity patterns arise from person-person and person-object interactions. It is also valuable for many practical applications, such as smart homes, gaming, health care, human-computer interaction and robotics. We are living at the beginning of the fourth industrial revolution (Industry 4.0), in which intelligent systems have become a central subject for both the research and industrial communities. Recent advances in 3D cameras, such as Microsoft's Kinect and Intel's RealSense, make it possible to capture RGB, depth and skeleton data in real time. This creates a new opportunity to improve human activity recognition in daily environments. In this research, we propose a novel approach to daily activity recognition and hypothesize that system performance can be improved by combining multimodal features.

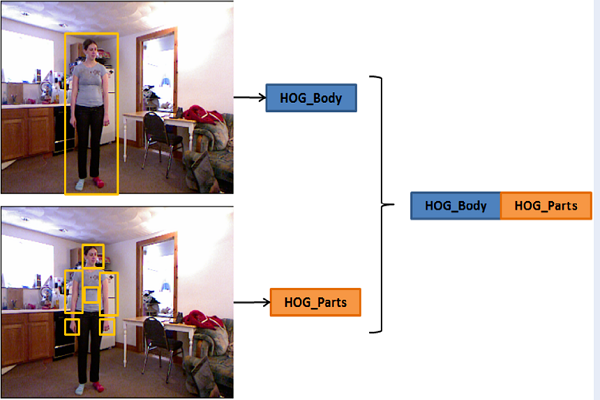

Methods: We extract spatial-temporal features for the human body, using a part-based representation built on the skeleton data from RGB-D data. We then combine multiple features from the two sources to yield robust features for activity representation. Finally, we use the Multiple Kernel Learning algorithm to fuse the features and identify the activity label for each video. To show generalizability, the proposed framework was tested on two challenging datasets using a cross-validation scheme.
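As a rough illustration of kernel-level fusion, the sketch below builds one RBF kernel per feature source and combines them with non-negative weights. It is not the Multiple Kernel Learning algorithm used in this work: the weights here come from a simple kernel-target alignment heuristic, chosen only because it fits in a few lines. The function names, the toy feature values, the `gamma` setting and the leave-one-out vote are all assumptions made for this example.

```python
import math

def rbf_kernel(X, gamma):
    """Gram matrix of an RBF kernel over the rows of X."""
    return [[math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, z)))
             for z in X] for x in X]

def alignment(K, y):
    """Kernel-target alignment <K, yy^T> / (||K||_F * ||yy^T||_F), labels in {-1, +1}."""
    n = len(y)
    num = sum(K[i][j] * y[i] * y[j] for i in range(n) for j in range(n))
    norm_k = math.sqrt(sum(K[i][j] ** 2 for i in range(n) for j in range(n)))
    return num / (norm_k * n)  # ||yy^T||_F equals n for +/-1 labels

def combine_kernels(kernels, y):
    """Weight each base kernel by its clipped alignment; weights sum to 1."""
    scores = [max(alignment(K, y), 0.0) for K in kernels]
    total = sum(scores) or 1.0
    weights = [s / total for s in scores]
    n = len(y)
    K = [[sum(w * Km[i][j] for w, Km in zip(weights, kernels))
          for j in range(n)] for i in range(n)]
    return weights, K

def leave_one_out_vote(K, y):
    """Label each sample by the sign of the kernel-weighted vote of the others.

    The kernel weights above were fitted on all labels, so this is only a
    sanity check on the toy data, not a proper evaluation.
    """
    preds = []
    for i in range(len(y)):
        score = sum(y[j] * K[i][j] for j in range(len(y)) if j != i)
        preds.append(1 if score >= 0 else -1)
    return preds

# Hypothetical toy data: two feature sources for six videos, two activity classes.
source_a = [[0.0, 0.1], [0.1, 0.0], [0.2, 0.1], [1.0, 0.9], [0.9, 1.0], [1.1, 1.0]]
source_b = [[0.5], [0.1], [0.9], [0.4], [0.0], [1.0]]  # deliberately uninformative
y = [-1, -1, -1, 1, 1, 1]

kernels = [rbf_kernel(source_a, gamma=2.0), rbf_kernel(source_b, gamma=2.0)]
weights, K = combine_kernels(kernels, y)
predictions = leave_one_out_vote(K, y)
print("kernel weights:", weights)
print("predictions:", predictions)
```

In this toy setting the informative source receives almost all of the weight, which is the behaviour one hopes for from any kernel-fusion scheme. A full MKL solver would instead learn the weights jointly with the classifier.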

Results: The proposed framework achieves accuracies of 94.16% and 95.31% on the CAD120 and MSR-Daily Activity 3D datasets, respectively.

Conclusion: These results show that the proposed method is effective and feasible for activity recognition systems in daily environments.